The automotive industry is undergoing a once-in-a-century transformation, from electrification and autonomy to software-defined vehicles and connected ecosystems. This transformation demands not just faster and smarter compute, but also efficient, reliable, real-time decision-making under extreme constraints of power, cost, and safety. At Vellex, we believe analog compute solutions are emerging as a critical enabler of this future, unlocking performance that purely digital approaches cannot match.

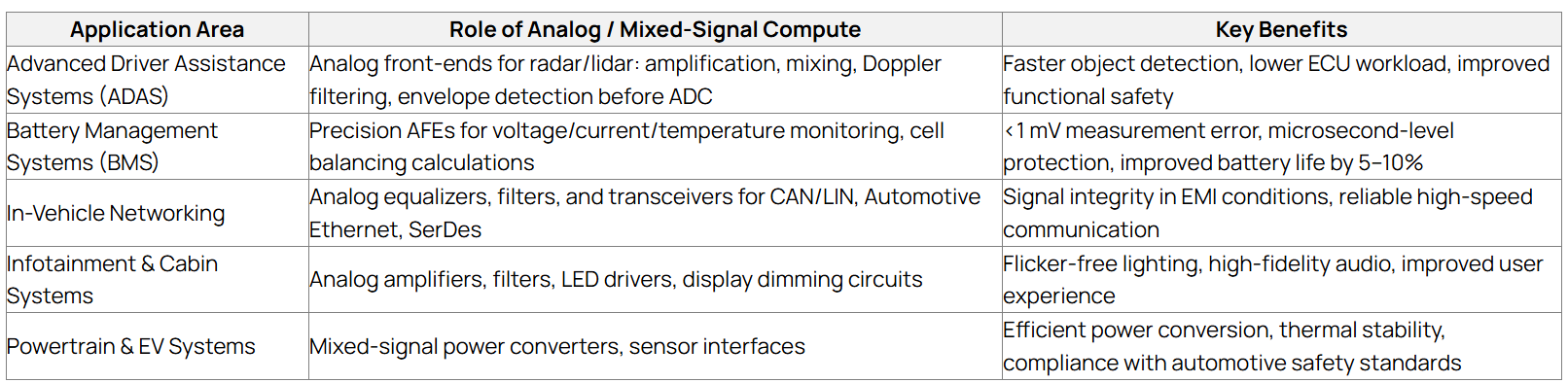

Analog compute in automotive refers to processing performed in the analog or mixed-signal domain, often near or within the sensor itself, before full digitization. This includes:

A modern Level 2+ ADAS system can produce 4-5 TB of data per hour. Moving and processing that data digitally consumes enormous power, generates heat, and introduces latency, all of which are unacceptable in safety-critical systems. Analog compute solutions address these challenges head-on:

To demonstrate the power of analog compute, consider a typical radar ECU design for autonomous driving. Traditional designs rely on wideband ADCs feeding heavy digital signal processing chains for clutter filtering. By integrating an analog compute front-end, key operations such as clutter rejection and Doppler pre-processing can be performed before digitization. This approach can deliver:

This optimization not only improves functional safety margins but also reduces thermal load and BOM cost.
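To make "clutter rejection" concrete, the sketch below numerically models a two-pulse canceller, a classic clutter filter that subtracts each pulse return from the previous one. It is only an illustration of the filtering idea, not Vellex's front-end design: the function names, amplitudes, Doppler frequency, and pulse repetition frequency are all assumed values chosen for the example.

```python
import cmath
import math


def simulate_returns(n_pulses, clutter_amp, target_amp, doppler_hz, prf_hz):
    """Complex returns from one range bin: static clutter plus one moving target.

    All parameters are hypothetical illustration values.
    """
    returns = []
    for n in range(n_pulses):
        # A moving target rotates in phase from pulse to pulse; clutter does not.
        phase = 2.0 * math.pi * doppler_hz * n / prf_hz
        returns.append(clutter_amp + target_amp * cmath.exp(1j * phase))
    return returns


def two_pulse_canceller(pulses):
    """First difference across successive pulses: constant (static) terms cancel."""
    return [pulses[n] - pulses[n - 1] for n in range(1, len(pulses))]


raw = simulate_returns(n_pulses=8, clutter_amp=100.0, target_amp=1.0,
                       doppler_hz=2500.0, prf_hz=10000.0)
filtered = two_pulse_canceller(raw)

print("peak magnitude before filtering:", round(max(abs(x) for x in raw), 3))
print("peak magnitude after filtering: ", round(max(abs(y) for y in filtered), 3))
```

In this toy case the clutter is 100 times stronger than the target, yet it vanishes after the subtraction while the target survives. Removing such large static returns before the ADC is one reason an analog front-end can relax the converter's dynamic-range requirements.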

From a business perspective, analog compute directly translates to better ROI for automakers and suppliers. Reduced power consumption leads to smaller heat sinks, lower cooling costs, and lighter ECUs, all of which cut vehicle weight and BOM cost. Shrinking digital processing requirements can cut ECU cost per vehicle by up to 20–30%. For EV manufacturers, improved efficiency translates into extended range, a powerful differentiator in a competitive market. Overall, analog compute helps OEMs launch safer, more feature-rich vehicles faster, while staying within strict cost and power budgets.

Safety is non-negotiable in automotive design, and analog compute plays a critical role in meeting functional safety targets. Processing signals closer to the source reduces latency, which can shorten stopping distance by several meters at highway speeds. Analog circuits also enable real-time fault monitoring, redundancy checks, and built-in self-test (BIST) capabilities that align with ISO 26262 ASIL-D requirements. This helps ensure that failures are detected early and mitigated before they can lead to hazardous events.
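The link between latency and distance is simple kinematics: at constant speed, a vehicle covers speed × latency before the system can respond. A minimal sketch follows; the 120 km/h speed and the latency values are assumptions chosen for illustration, not measurements from any particular system.

```python
def distance_during_latency_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance in metres covered at constant speed during a processing delay."""
    speed_m_per_s = speed_kmh / 3.6          # km/h -> m/s
    return speed_m_per_s * latency_ms / 1000.0  # ms -> s


# Hypothetical end-to-end latencies at an assumed highway speed of 120 km/h.
for latency_ms in (10, 50, 100):
    d = distance_during_latency_m(120.0, latency_ms)
    print(f"{latency_ms:>3} ms at 120 km/h -> {d:.2f} m")
```

Under these assumptions, trimming 100 ms of processing delay corresponds to roughly 3.3 m less distance travelled before braking begins.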

For the end user, analog compute means a more responsive, safer, and enjoyable driving experience. Drivers benefit from smoother ADAS interventions, faster emergency braking, and longer EV range. Passengers experience higher-quality audio, flicker-free lighting, and more reliable infotainment systems. Consumers also indirectly benefit from lower vehicle costs, as OEMs can pass along savings from more efficient, integrated electronics.

The next wave of innovation is analog in-memory compute (AiMC) and in-sensor compute. Crossbar-based AiMC can execute matrix-vector multiplications in O(1) time, offering 50–100x better performance-per-watt than conventional digital AI accelerators. For cameras, in-sensor compute can perform operations like Sobel edge detection or HDR compression before leaving the sensor, reducing bandwidth needs by up to 60%.
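The crossbar idea can be sketched in a few lines. In an idealised array, each cell passes a current equal to its conductance times the applied row voltage (Ohm's law), and each column wire sums the currents of its cells (Kirchhoff's current law), so the column currents form a matrix-vector product. The function name and the conductance and voltage values below are hypothetical, and the model ignores wire resistance, noise, and DAC/ADC effects.

```python
def crossbar_mvm(conductances, voltages):
    """Idealised crossbar: column currents I[j] = sum_i G[i][j] * V[i].

    conductances[i][j] is the conductance (siemens) of the cell joining
    input row i to output column j; voltages[i] is the row drive (volts).
    """
    n_rows = len(conductances)
    n_cols = len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("one input voltage per row is required")
    currents = [0.0] * n_cols
    for i in range(n_rows):
        for j in range(n_cols):
            # Ohm's law per cell, accumulated on the shared column wire.
            currents[j] += conductances[i][j] * voltages[i]
    return currents


# Hypothetical 3x2 array: weights stored as conductances, inputs as voltages.
G = [[1e-6, 2e-6],
     [3e-6, 4e-6],
     [5e-6, 6e-6]]
V = [0.1, 0.2, 0.3]
I = crossbar_mvm(G, V)
print([f"{amps * 1e6:.2f} uA" for amps in I])
```

The software loop visits every cell in turn, whereas in the physical array all cells conduct simultaneously. That parallelism is where the constant-time claim comes from.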

As vehicles evolve into high-performance, AI-driven machines, analog compute is no longer a niche; it is a necessity. By blending analog efficiency with digital intelligence, we can deliver safer, greener, and more responsive vehicles. At Vellex, we are proud to help drive this analog intelligence revolution for the automotive world.
